The Real Reason Zero-Downtime Migrations Fail

For 90% of mid-size and large enterprises, downtime costs exceed $300,000 per hour. For 41%, the exposure runs between $1 million and $5 million per hour [1]. At that scale, a maintenance window is a financial and contractual liability.

That exposure sits with one person. The senior architect or engineering director who has been told there are no maintenance windows, handed a legacy system whose full behavior is not documented anywhere, and asked to modernize it without disrupting the business.

The patterns are not the problem. What kills these programs is starting execution without the infrastructure to reach the end.

EY’s 2025 analysis of banking modernization programs found that dual-run periods extend 12 to 24 months beyond original estimates in the majority of programs [2]. That is not a launch failure. Programs stall after the pattern is chosen because parity validation stays manual, extraction sequencing creates re-entrant dependencies, and parallel run exit criteria were never defined in measurable terms.

This article covers the execution infrastructure that separates programs that reach cutover from those that compound costs indefinitely.

The Five Zero-Downtime Migration Patterns: What Each One Requires to Actually Work

The useful question is not which pattern to choose. It is what each pattern assumes the team has already built before it can function as designed.

| Pattern | Best Fit | What It Requires | Primary Failure Risk | Source |

| Strangler Fig | Incremental service extraction from a live monolith | Full dependency map; bounded context mapping before first extraction; façade resilience spec | Façade becomes a single point of failure if resilience is underspecified | Fowler [3]; AWS/Azure [5] |

| Blue-Green Deployment | Stateless application tiers; atomic environment switch | Clean state management; rollback path for stateful data; schema change strategy | Database schema changes and stateful rollback break the model in full legacy modernization | Fowler [3] |

| Shadow Mode | Parity validation without user-visible exposure | Production traffic mirroring; async output comparison; legacy source as truth | Incomplete behavioral coverage if comparison logic is underpowered or sampled | — |

| Canary Release | Staged traffic promotion; real behavioral evidence before full cutover | Minimum 72-hour soak per traffic increment for stateful services; defined promotion criteria | Premature promotion before stateful edge cases have had sufficient exposure | — |

| Parallel Running | Highest-confidence validation for regulated or high-stakes systems | Statistical exit criteria defined before parallel run begins; full business cycle coverage including peak and seasonal loads | Calendar-based exit criteria produce false confidence; parallel run extends indefinitely without statistical thresholds | — |

Every pattern on this list assumes the team understands the codebase well enough to sequence extraction correctly, has parity validation infrastructure capable of producing statistical confidence, and has a governance model that keeps both systems audit-ready throughout. Most programs have none of these before execution begins.

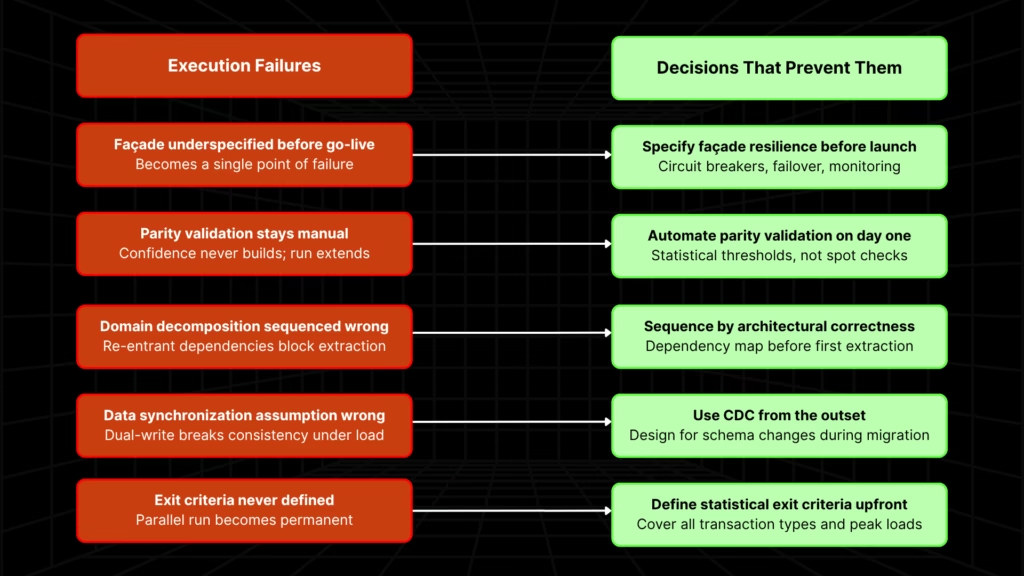

Why Zero-Downtime Migration Programs Stall: Five Execution Failures

Failure 1: The Façade Is Underspecified Before It Goes Live

The failure mode is design-time underspecification. Circuit breakers, failover behavior, performance budgets, and monitoring instrumentation are treated as operational concerns to address later.

When the façade fails, the application fails regardless of whether the legacy system and modern services behind it are healthy. AWS Prescriptive Guidance and the Microsoft Azure Architecture Center both identify façade-as-single-point-of-failure as the primary technical risk of the strangler fig pattern [5].

The façade must meet the same availability requirements as the system it fronts. That is a design decision, not an operational one.

Failure 2: Parity Validation Stays Manual and Confidence Never Builds

Parallel run exit criteria set by calendar (“run for three months and see”) produce false confidence and extend programs indefinitely. Manual spot-check validation cannot produce statistical confidence across millions of daily transactions.

EY 2025 documents that dual-run periods extend 12 to 24 months beyond estimates in the majority of programs [2]. The TSB Bank migration of 2018 illustrates the mechanism: edge cases suppressed by limited test datasets (customers unable to log in, orders mispriced, consent records missing) surfaced only under full production load after go-live [4].

Controlled testing environments cannot replicate production-scale edge case exposure.

For more on how accumulated undocumented business rules create the validation surface that manual review cannot cover, see our analysis of technical debt in legacy applications.

Failure 3: Domain Decomposition Sequence Is Wrong From the Start

The first module extracted was chosen for team familiarity or organizational convenience, not architectural correctness. Bounded contexts were not mapped before extraction began.

The extracted service ends up calling back into the monolith for data or logic it cannot own independently, like a re-entrant dependency that cannot be resolved without re-extracting the component from scratch.

AWS Prescriptive Guidance identifies unclear domain boundaries as a critical precondition failure for the strangler fig pattern [5]. The correct extraction criterion is architectural: cleanest domain boundary, lowest downstream dependency count, sufficient test coverage to validate parity.

The dependency map must exist before the first extraction decision and it is the input to every sequencing decision made across the program’s lifecycle, not a one-time upfront deliverable.

For tightly coupled systems where a single change cascades across modules, data, and downstream dependencies, this sequencing risk compounds at every extraction step.

Failure 4: The Data Synchronization Assumption Was Wrong

Most zero-downtime database migration failures trace back to a single architectural decision made early: dual-write vs. change data capture. Dual-write, where the application writes simultaneously to both legacy and modern databases, is dangerous for data consistency and appropriate for almost no system where consistency is paramount. The inconsistencies it produces appear under production load, not in controlled testing.

Change data capture is the established consensus for zero-downtime database migration. It must be designed into the program from the outset, with an explicit plan for schema changes during active migration. A CDC pipeline that breaks silently on a schema change mid-program creates a data consistency incident that is harder to resolve than any parity validation gap.

Failure 5: Exit Criteria Were Never Defined, and Parallel Run Became Permanent

Without statistical parity thresholds covering all transaction types, seasonal patterns, peak loads, and regulatory reporting windows, defined before the parallel run begins, there is no objective mechanism for deciding when cutover is safe. Infrastructure costs compound. Stakeholder confidence erodes. Every month of parallel run extension erodes the modernization ROI case, and that erosion accelerates the longer both systems run.

A structured assessment of your codebase produces the dependency map, domain boundary analysis, and sequencing readiness view that every decision in this framework depends on, before the first module is extracted.

Five Architectural Decisions That Determine Whether Your Program Reaches Cutover

Each failure mode maps directly to an architectural decision. Made correctly, and before execution begins, each decision eliminates the corresponding failure.

| Decision | The Correct Criterion | Failure It Prevents |

| First extraction sequence | Cleanest domain boundary, lowest downstream dependency count, sufficient test coverage — not team familiarity or organizational convenience | Failure 3 — re-entrant dependencies that cannot be resolved without re-extraction |

| Façade resilience specification | Circuit breakers, failover behavior, performance budgets, and monitoring instrumentation specified before façade enters production — not retrofitted after the first incident | Failure 1 — façade becomes a production liability when it should be a routing layer |

| Database ownership model | Shared database acceptable only as a transitional state with a funded, time-bound decommissioning plan; separated database with CDC infrastructure from the outset produces clean domain ownership | Failure 4 — dual-write breaks data consistency; CDC is the de facto method for zero-downtime database migration |

| Parallel run exit criteria | Statistical parity thresholds defined before parallel run begins — covering all transaction types, seasonal patterns, peak loads, and regulatory reporting windows | Failure 5 — without defined thresholds, parallel run extends indefinitely and the ROI case deteriorates each quarter |

| Compliance architecture for dual-system operation | Both systems maintain full compliance posture throughout parallel run — SOX provenance, HIPAA access controls, PCI-DSS security equivalence — funded and governed before parallel run begins | Audit surface doubles during parallel run; most modernization budgets do not account for this until it creates a regulatory exposure |

Every decision on this list requires information that the program cannot generate during execution. Extraction sequence requires a full dependency map.

Exit criteria require knowing what the full business cycle looks like before the parallel run begins. Compliance architecture requires a funded plan before the audit surface doubles.

How Legacyleap Provides the Execution Infrastructure Zero-Downtime Programs Are Missing

The patterns define the shape of the program. Legacyleap provides the execution infrastructure that makes it reach its end.

That means sequenced extraction grounded in full dependency visibility, automated parity validation that replaces manual spot-checking with continuous statistical evidence, and a governance model that keeps every transformation auditable across 18 months of dual-system operation.

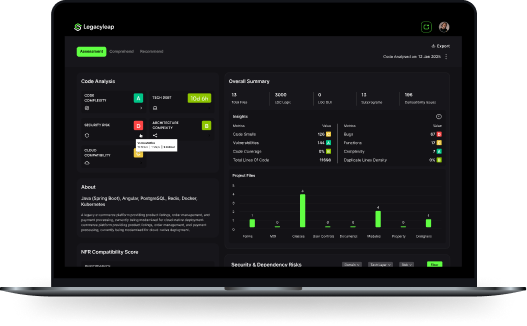

Assessment Agent: Extraction Sequencing (Failure 3, Decision 1)

Before a single module is extracted, the Assessment Agent produces a full dependency map and domain boundary analysis across the entire codebase. This determines extraction order on architectural grounds, not team familiarity or organizational convenience.

It is not a one-time upfront deliverable. It is the input to every sequencing decision made across the program’s lifecycle.

QA Agent: Automated Parity Validation (Failure 2, Decision 4)

The QA Agent replaces manual spot-check validation with continuous, automated parity verification. It produces behavior-parity checkpoints comparing legacy and modernized components, regression maps showing where test coverage is missing, and edge-case instrumentation for high-risk transaction flows.

It integrates directly with existing CI/CD pipelines like Jenkins, GitHub Actions, Azure DevOps, and GitLab, so validation runs as part of the delivery pipeline, not as a separate review cycle.

This is what makes parallel run exit criteria operational rather than aspirational. Statistical thresholds can be defined before the parallel run begins because the infrastructure exists to measure them continuously. Parallel run has a defined end.

Modernization Agent: Governed Transformation (Decision 5)

Every transformation is diff-based and human-reviewed. AI cannot merge, deploy, or execute code. Every change is auditable, reversible, and traceable, all reviewable before acceptance.

SOX provenance, HIPAA access control consistency, and PCI-DSS security equivalence are maintained through the audit trail the platform generates as a standard output, not a compliance layer added at the end of the program.

Deployment and Rollback

Canary, blue-green, and staged rollout deployment patterns are all supported. Deployment strategy is controlled by the client’s DevOps pipeline. Rollback readiness is built into every output: versioned artifacts, parity tests that catch behavioral mismatches before release, and graceful fallback policies configured to system criticality.

Proof of Execution

A UK- and Ireland-based fuel distribution software company ran a legacy VB6 platform the business depended on daily and could not take offline. The modernization to .NET required full dependency mapping before extraction began and automated parity validation throughout parallel runs. The program completed with zero downtime, 80% reduction in manual effort, 60% cost reduction, and 40% faster feature deployment after cutover

Another global credit reporting firm operating under non-negotiable data integrity requirements for production credit scoring faced 1.5 million lines of Ab Initio code that needed to move to a Java-Spark architecture without disrupting live operations. Full data logic preservation was the governing constraint throughout. The migration was completed with production uninterrupted, and all data logic was verified through automated parity validation at scale.

Zero-Downtime Modernization Succeeds When the Execution Infrastructure Exists Before It Is Needed

The patterns are proven. What is not proven, in most programs, is the execution infrastructure that makes them reach their conclusion: sequencing decisions made before the first extraction, parity validation in place before parallel run begins, and governance architecture specified before the façade goes live.

Every façade incident, every re-entrant dependency spiral, every parallel run that never ends traces back to a decision that was deferred. The cost of deferral compounds in infrastructure spend, stakeholder confidence, and a modernization ROI case that deteriorates each quarter the program stays in dual-system operation.

Incremental modernization is not a preference when the system cannot go offline. It is the only viable approach. And that approach requires understanding the codebase before extracting from it.

Request a $0 Assessment: Produces the dependency map, domain boundary analysis, risk indicators, and sequencing readiness view your program depends on. No cost. Concrete deliverables. The right starting point before the first module is extracted.

Book a Demo: See how Legacyleap governs extraction sequencing, automated parity validation, and governed transformation across a real program.

FAQs

Duration depends on codebase size, coupling complexity, and parallel run length. A well-scoped program with clean domain boundaries and automated parity validation in place can reach cutover in 9 to 18 months. Programs that run longer are almost always programs where exit criteria were not defined before parallel run began, not programs where the system was genuinely more complex. EY’s 2025 analysis documents dual-run extensions of 12 to 24 months beyond estimates in the majority of banking modernization programs.

Blue-green runs two identical environments and switches traffic atomically. The entire user base moves in a single cutover event. Canary promotes traffic incrementally, expanding the percentage routed to the new system as validation evidence accumulates. Blue-green requires clean stateless architecture and a resolved database schema strategy. Canary is more appropriate for stateful systems where production-scale edge case exposure needs to build gradually, with a defined soak period per traffic increment, before full cutover is safe.

Yes, and for business-critical systems that cannot go offline, incremental extraction is the only viable approach. The strangler fig pattern extracts bounded services from the live monolith without requiring a full rewrite before any component is replaced. What determines whether this works is the quality of the dependency map before extraction begins and the rigor of parity validation at each boundary. The risk is not in the approach but in starting extraction before the codebase is sufficiently understood.

It depends on where the failure occurs. A façade failure early in a strangler fig program typically means a rollback to legacy routing, which is recoverable if rollback readiness was built in. A CDC pipeline break mid-program is harder to recover from because the data consistency window may not be characterized until cutover is attempted. Failures discovered during parallel run are recoverable. Failures discovered after cutover, because parity validation was insufficient, carry reputational, regulatory, and financial consequences that extend well beyond the program itself.

The direct cost is running two production-grade environments simultaneously. For large enterprises, that overhead reaches seven figures annually. The compounding cost is harder to quantify: the modernization ROI case weakens each quarter, engineering capacity stays split across two codebases, and organizational appetite for reaching cutover erodes. EY’s 2025 analysis documents dual-run extensions of 12 to 24 months in the majority of banking modernization programs. The cost of not cutting over is ongoing.

Start by enumerating every transaction type the system processes, including edge cases and seasonal variants, not broad categories. For each type, define an acceptable divergence rate; financial systems typically require zero tolerance on money movement and regulatory reporting paths. Then define the minimum observation window needed to exercise the full edge case surface the legacy system has accumulated. Finally, identify the full business cycle (month-end, fiscal year-end, peak load periods) that must be covered before a cutover decision is executable.

References

[1] ITIC 2024 Hourly Cost of Downtime Survey — https://itic-corp.com/

[2] EY 2025 Banking Modernization Analysis (via Legacyleap blog) — https://www.legacyleap.ai/blog/application-modernization-guide/

[3] Martin Fowler — Strangler Fig Application and Blue-Green Deployment — https://martinfowler.com/

[4] TSB Bank Migration Failure, 2018 — documented across public post-incident reports including TSB’s own independent review

[5] AWS Prescriptive Guidance — Strangler Fig and Anti-Corruption Layer patterns; Microsoft Azure Architecture Center — Strangler Fig pattern and phased migration approach — https://docs.aws.amazon.com/prescriptive-guidance/ | https://learn.microsoft.com/azure/architecture/