Why Enterprise AI Projects Keep Failing

The numbers on enterprise AI failure are no longer surprising. They are, however, specific enough to warrant a different kind of conversation.

In 2025, 42% of companies abandoned most of their AI initiatives, up from 17% the year before, at an average sunk cost of $7.2 million per abandoned initiative [1]. Fewer than one in three GenAI experiments moved into production, and only 1% of executives describe their GenAI rollouts as mature.

These are not early-adopter growing pains. This is a systemic pattern, and the cause is not what most post-mortems name. Infrastructure limitations account for 64% of GenAI scaling failures [2]. The AI isn’t underperforming. The architecture it’s being asked to run on is.

There is a principle worth naming precisely:

AI does not create capability from dysfunction. It amplifies whatever the underlying architecture already is.

If the architecture is clean, AI scales. If it is fragile, AI exposes every crack faster and at greater cost than any previous technology layer would have. Call this architectural amplification.

This frame makes sense of nearly every enterprise AI failure case. It is also what makes the infrastructure root cause so consequential: no amount of model improvement closes an architectural gap.

The rest of this article covers three things in sequence:

- The specific mechanisms through which legacy architecture prevents AI from reaching production,

- What AI-ready actually means as a concrete, checkable architectural state, and

- What an execution path looks like when it doesn’t require a completed multi-year modernization program before a single AI initiative can deploy.

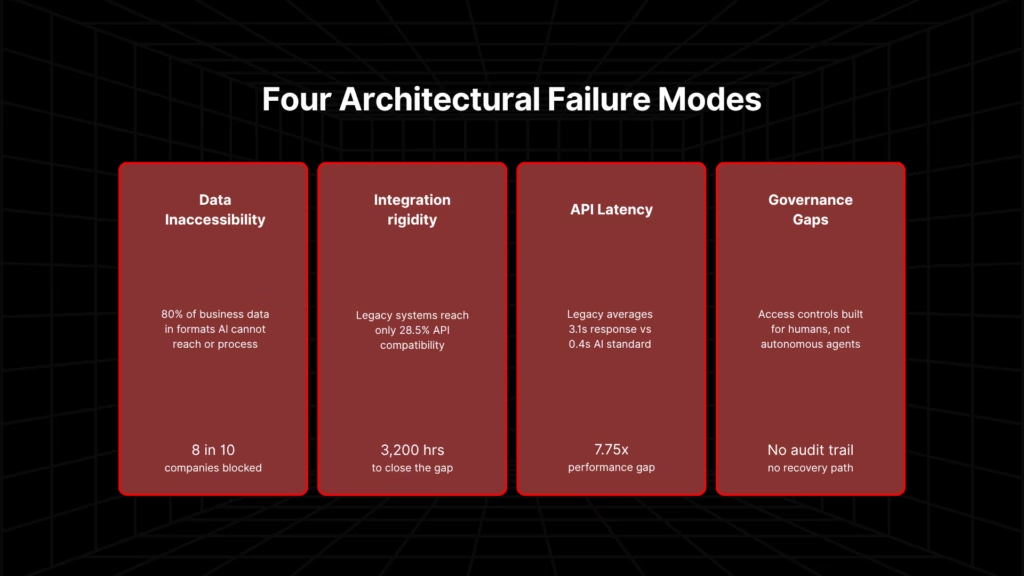

4 Ways Legacy Architecture Blocks AI in Production

Most accounts of why enterprise AI fails on legacy infrastructure stop at the aggregate. Infrastructure is named as the cause, and then the argument moves on.

This section instead gives the mechanistic account: four specific architectural conditions that prevent AI from leaving the POC environment, each with the data behind it.

Failure Mode 1: Data Inaccessibility

Eight in ten companies cite data limitations as a roadblock to scaling agentic AI [3]. Corporate databases typically capture only around 20% of business-critical information in the structured formats AI systems can process.

The remaining 80% exists in unstructured form such as documents, flat files, undocumented application state, and legacy reporting outputs that most AI systems cannot reach.

The structural reasons legacy databases cannot serve AI at the speed and in the format it requires go deeper than most modernization plans account for. See what database modernization for AI involves.

Legacy architectures were not designed to expose data in the formats, at the speeds, or with the governance that AI deployment requires. Batch processing cycles introduce latency that breaks any agentic use case dependent on current data. Proprietary storage formats block extraction without custom intermediary layers. The AI is ready. The data is not.
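To make the batch-latency point concrete, here is a minimal Python sketch of the freshness guard an agent-facing data service might apply before an agent acts on a record. The function name, the five-minute budget, and the timestamps are illustrative assumptions, not values from any cited source.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_updated: datetime, now: datetime, max_staleness: timedelta) -> bool:
    """Return True if a record was updated within the allowed staleness window."""
    return now - last_updated <= max_staleness


# Fixed "current time" so the example is deterministic.
now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)

# Hypothetical record last refreshed by a nightly batch job six hours ago.
batch_record_ts = now - timedelta(hours=6)
# Hypothetical record updated by a real-time change feed 30 seconds ago.
streamed_record_ts = now - timedelta(seconds=30)

# Assumed freshness budget for a time-sensitive agentic use case.
budget = timedelta(minutes=5)

print(is_fresh(batch_record_ts, now, budget))     # False: the agent should not act on it
print(is_fresh(streamed_record_ts, now, budget))  # True
```

No freshness budget a batch cycle measured in hours can satisfy is tight enough for this kind of use case, which is why the guard alone cannot fix the problem; the data path itself has to change.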

In one financial services modernization, legacy ETL batch pipelines were the precise reason AI-ready data access was impossible until the underlying data infrastructure was rebuilt. Read the full account here.

Failure Mode 2: Integration Rigidity

Legacy systems achieve only 28.5% compatibility with the API integration endpoints that AI tools require [4]. Closing that compatibility gap demands an average of 52 custom API endpoints per legacy system (approximately 3,200 development hours) before a single AI use case can connect to the underlying system.

That is not integration friction. It is integration debt that must be paid before the AI project begins. And it is paid once per system, for every system the AI use case depends on.

In an enterprise with dozens of interconnected legacy applications, this debt accumulates before any model is trained or deployed.
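The compounding is simple arithmetic. This sketch applies the averages from the study cited above [4] to a hypothetical use case that touches five legacy systems, assuming (as a simplification) that each system needs the full average remediation and that no work is shared between systems.

```python
# Averages reported in the Singh (2025) study cited above [4].
ENDPOINTS_PER_SYSTEM = 52
HOURS_PER_SYSTEM = 3_200


def integration_debt(systems_touched: int) -> dict:
    """Estimate up-front integration work for one AI use case.

    Simplifying assumption: every legacy system the use case depends on
    needs the study's average remediation, with no shared work.
    """
    return {
        "custom_endpoints": systems_touched * ENDPOINTS_PER_SYSTEM,
        "dev_hours": systems_touched * HOURS_PER_SYSTEM,
    }


print(round(HOURS_PER_SYSTEM / ENDPOINTS_PER_SYSTEM, 1))  # 61.5 hours per endpoint
print(integration_debt(5))  # {'custom_endpoints': 260, 'dev_hours': 16000}
```

Real estates will vary widely around these averages. The point is that the cost scales with the number of systems a use case depends on, and all of it lands before any model work starts.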

Failure Mode 3: API Latency Incompatibility

Legacy API response latency averages 3.1 seconds. The standard required for reliable AI interaction is 0.4 seconds, a 7.75x performance gap [4].

For narrow, single-turn AI use cases, this gap may be tolerable. For agentic AI executing multi-turn workflows across systems, it is disqualifying.

In practice, agentic systems operating against legacy enterprise infrastructure succeed in well under half of multi-turn tasks in production deployments. The failure is not in the agent. It is in the latency profile of the systems the agent is calling.
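Here is a rough way to see why the gap matters more for agents than for single-turn use cases: when a workflow makes its tool calls one after another, per-call latency adds up. The sketch below uses the 3.1 s and 0.4 s figures cited above [4]. The 12-call workflow and the 30 s task timeout are hypothetical, and model inference time is ignored.

```python
LEGACY_LATENCY_S = 3.1     # average legacy API response time [4]
AI_TARGET_LATENCY_S = 0.4  # cited standard for reliable AI interaction [4]
TASK_TIMEOUT_S = 30.0      # hypothetical end-to-end budget for one agent task


def workflow_latency(sequential_calls: int, per_call_s: float) -> float:
    """Total time spent waiting on tool calls made one after another.

    Ignores model inference, retries, and any parallelism.
    """
    return sequential_calls * per_call_s


calls = 12  # hypothetical multi-turn workflow

print(round(LEGACY_LATENCY_S / AI_TARGET_LATENCY_S, 2))            # 7.75
print(round(workflow_latency(calls, LEGACY_LATENCY_S), 1))         # 37.2
print(round(workflow_latency(calls, AI_TARGET_LATENCY_S), 1))      # 4.8
print(workflow_latency(calls, LEGACY_LATENCY_S) > TASK_TIMEOUT_S)  # True
```

Retries on flaky legacy endpoints would push the legacy total higher still, because each retry adds another full round trip.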

For more on what successful agentic AI integration looks like in the modernization lifecycle, read: Agentic AI Application Modernization for Enterprise Systems.

Failure Mode 4: Governance and Observability Gaps

Legacy access control systems were designed for human users in role-based sessions. An autonomous agent handling a cross-system enterprise workflow has fundamentally different requirements: contextual, least-privilege permissions per tool call, not session-level access grants.

When an agent encounters a system that cannot fulfill a tool call cleanly, whether because permissions are scoped incorrectly, the endpoint doesn’t exist, or the response schema is inconsistent, it breaks down or behaves unpredictably.

A typical legacy architecture has no observability layer, no audit trail of agent actions, and no recovery mechanism. The enterprise cannot see what the agent did, cannot explain why it failed, and cannot reproduce the failure reliably.
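The difference between session-scoped roles and per-call least privilege is easiest to see in code. This is a minimal sketch, assuming a hypothetical in-process policy engine. Each tool call is authorized against grants scoped to the specific agent, tool, and resource, and every decision is written to an audit log whether it was allowed or not. The agent IDs, tool names, and grant structure are illustrative, not any product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToolCall:
    agent_id: str
    tool: str      # hypothetical tool name, e.g. "invoices.read"
    resource: str  # the specific record this call targets


@dataclass
class PolicyEngine:
    # Hypothetical grants: (agent_id, tool) -> resources that call may touch.
    grants: dict[tuple[str, str], set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, call: ToolCall) -> bool:
        allowed = call.resource in self.grants.get((call.agent_id, call.tool), set())
        # Record every decision, allowed or denied, so behavior can be explained later.
        self.audit_log.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": call.agent_id,
            "tool": call.tool,
            "resource": call.resource,
            "allowed": allowed,
        })
        return allowed


engine = PolicyEngine(grants={("billing-agent", "invoices.read"): {"cust-42"}})
print(engine.authorize(ToolCall("billing-agent", "invoices.read", "cust-42")))   # True
print(engine.authorize(ToolCall("billing-agent", "invoices.read", "cust-99")))   # False: wrong resource
print(engine.authorize(ToolCall("billing-agent", "invoices.write", "cust-42")))  # False: tool not granted
print(len(engine.audit_log))  # 3
```

In production the audit entries would go to durable, queryable storage and be correlated with the agent's execution trace. An in-memory list simply keeps the sketch self-contained.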

The Structural Diagnosis

Legacy enterprise platforms, where they support AI at all, were architected for isolated models serving narrow use cases behind static API endpoints. Modern agentic AI requires an orchestration layer, governed real-time data access, and full observability across the execution graph. These are not features that can be configured into a legacy architecture. They are properties the architecture must be designed to support.

| Requirement | Legacy Architecture | AI-Ready Architecture |
|---|---|---|
| Data access model | Batch cycles, proprietary formats | Real-time, structured, governed |
| API surface | Partial, inconsistently documented | Complete, stable, versioned |
| Latency profile | 3.1s average response | 0.4s or less for AI interaction |
| Access control | Role-based, session-scoped | Contextual, least-privilege per call |
| Observability | Application-level logs | Full execution graph traceability |
| CI/CD for AI | Not supported | Service-level independent deployment |

Why AI Pilots Succeed, but Production Deployments Fail

There is a pattern so consistent across enterprise AI programs that it has its own name: pilot purgatory. The POC runs beautifully. Stakeholders are impressed. Budget gets approved. And then the production deployment stalls, quietly, while the team cycles through explanations that avoid naming the real cause.

The real cause is almost always architectural.

POC environments are controlled by design. Data is curated and cleaned before the demo. APIs are mocked or simplified. The agent runs against a subset of systems that were chosen because they work. None of that is true in production.

In production, the AI encounters the actual architecture. Batch processing cycles mean the data the agent needs was last updated six hours ago. Undocumented legacy modules contain hardcoded business logic that was never exposed as an API because no one anticipated anything would need to call it programmatically.

The observability layer that would tell you what the agent did and why it failed does not exist. The CI/CD pipeline that would let you deploy a model update independently of the application it runs on was never built.

Each of the four failure modes described above is invisible in a POC. Every one of them appears in production. This is not a sequencing problem or a project management failure. It is an architectural gap that no amount of sprint planning closes.

The scale of this gap is consistent across the industry. Fewer than one in three GenAI experiments move into production. Fewer than 10% of enterprises have scaled agentic AI to deliver tangible value, despite roughly 65% actively experimenting. The models are not the variable. The infrastructure is.

Pilot purgatory ends when the architecture changes. Not before.

The Financial Compounding Effect

There is a budget dynamic that makes this structurally worse over time. Organizations already spend 60–80% of their IT budgets maintaining legacy systems, a cost breakdown that makes the modernization investment argument far more concrete than most leadership teams realize.

That spend is not discretionary. It is the cost of keeping existing operations running. What it leaves behind is insufficient engineering capacity to bridge a POC to production, because the bandwidth required to close the architectural gap is already committed to keeping the lights on.

Layering AI infrastructure on top of legacy systems without reducing the legacy footprint compounds the problem. Legacy maintenance costs stay unchanged. A new AI operating burden is added on top.

ROI on technology spend flattens, because every dollar of AI investment is partially offset by the cost of maintaining the architecture it cannot reliably run on. Nearly all organizations report that AI sprawl is already increasing complexity, technical debt, and security risk, not reducing it.

The result is a pattern where AI initiatives produce POCs that demonstrate value in isolation and then stall. The organization has spent real money on model selection, prompt engineering, and pilot infrastructure, while the architectural gap that blocked production deployment from the start remains untouched.

The Urgency Frame

The commercial window for demonstrating AI value is approximately two years. Enterprises that cannot show measurable AI returns within that window face board-level pressure to redirect budgets.

Among senior executives at Global 2000 organizations, 85% already have serious concerns about their technology estate’s ability to support AI programs. And 40% of agentic AI projects are projected to be abandoned by 2027 because the infrastructure they depended on was never prepared.

The cost of continuing to treat the production gap as a project management problem is measurable and time-bounded.

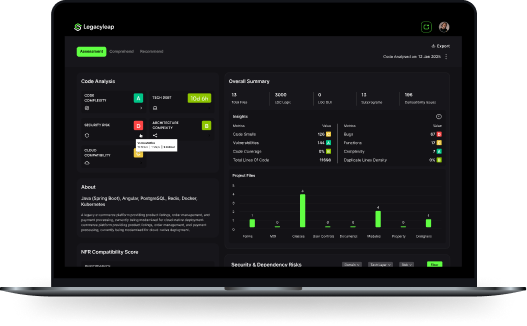

Most AI pilots stall because the architecture was never ready for production. Legacyleap’s $0 Modernization Assessment maps exactly where your systems will block deployment before your next sprint begins. A dependency map, risk indicators, and a modernization blueprint are included. No consulting prerequisite.

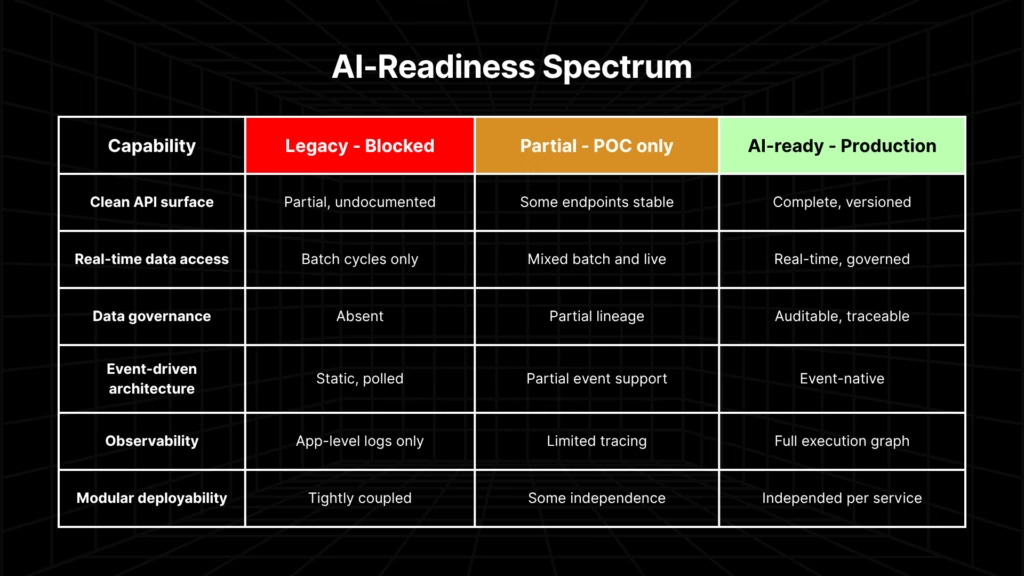

What AI-Ready Architecture Actually Looks Like: A 6-Point Checklist

Many articles in this space describe why AI fails on legacy infrastructure. Very few define what the destination looks like with enough precision to be actionable. “AI-ready architecture” is used as an aspiration without a specification. What follows is the specification.

AI deployment at production scale depends on six architectural capabilities. These are testable conditions, not aspirational properties. An organization can evaluate its current systems against each one.

- Clean API surface area. Documented, stable, reliable endpoints that AI agents can call without custom connectors built per use case. Every custom connector is debt that must be paid before the AI initiative can start.

- Real-time data access. Not batch processing cycles. AI operating on data that is hours or days stale fails for any time-sensitive or agentic use case. The data must be accessible at the moment the agent needs it.

- Data governance and lineage. Quality-monitored, traceable data that AI outputs can be audited against. In regulated industries, this is a compliance requirement. AI systems that cannot account for what data they acted on cannot be trusted in production.

- Event-driven architecture. Systems that emit events AI agents can listen for and respond to, rather than static workflows that must be polled. Polling introduces latency and coupling that break agentic execution at scale.

- Observability. End-to-end traceability across agent execution graphs. Required for production governance, compliance, and the ability to diagnose AI behavior when it deviates from expectations. Without this, production AI is a black box with no recovery path.

- Modular, independently deployable services. The prerequisite for CI/CD for AI model deployment. Tightly coupled services prevent safe AI deployment because a change to one component cascades unpredictably across the system. Independent deployability is what makes iterative AI delivery possible.
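Because the six capabilities are meant to be testable, they can be tracked as a simple per-system record. The Python sketch below is one hypothetical way to do that. The field names mirror the list above, and the example system and its results are invented for illustration. In a real assessment, each boolean would be derived from a measurement (for example, p95 API latency against a target, or whether a change feed exists) rather than set by hand.

```python
from dataclasses import dataclass, fields


@dataclass
class ReadinessAssessment:
    """One system's status against the six capabilities (True = met)."""
    clean_api_surface: bool
    real_time_data_access: bool
    data_governance_and_lineage: bool
    event_driven: bool
    observability: bool
    independently_deployable: bool

    def gaps(self) -> list[str]:
        # Names of the capabilities this system does not yet meet, in checklist order.
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def score(self) -> str:
        total = len(fields(self))
        return f"{total - len(self.gaps())}/{total}"


# Hypothetical legacy order-management system.
oms = ReadinessAssessment(
    clean_api_surface=False,
    real_time_data_access=False,
    data_governance_and_lineage=True,
    event_driven=False,
    observability=False,
    independently_deployable=False,
)
print(oms.score())  # 1/6
print(oms.gaps())
```

Keeping the gaps as a list, rather than only a score, matters for sequencing: two systems at 3/6 can need entirely different work.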

The performance differential between organizations that reach this state before launching AI and those that don’t is significant. In an analysis of more than 2,400 enterprise AI initiatives, organizations that invested in data platforms before launching AI achieved 2.6x higher success rates [5].

Deliberate modernizers, those that direct the majority of application spending toward modernization and new capabilities, invest nearly twice as much in data and analytics as peers and consistently outperform on AI deployment outcomes.

For teams that want to know exactly where their systems stand against these six capabilities before committing to a modernization path, this is where that process starts. AI readiness is a Horizon 3 outcome, but only if modernization planning accounts for it from day one. For a structured approach to sequencing, see Application Modernization ROI: Three-Horizon Framework.

How Legacyleap Breaks the Chicken-and-Egg Problem

There is a structural trap at the center of the enterprise AI conversation: organizations need AI to modernize efficiently, but legacy systems prevent AI deployment. The loop is real, and every conventional response operates around it rather than through it.

| Conventional Approach | What It Requires | What It Leaves Unresolved |
|---|---|---|
| Consulting-led discovery | Months of human analysis before transformation | Findings become stale; transformation still requires a separate engagement |
| API wrapping | Working endpoints to wrap around | Legacy debt unchanged; custom connectors accumulate and degrade |
| Copilots / code assistants | Already-modern codebase to assist with | No leverage on legacy architecture; the underlying system is untouched |
| Legacyleap | Legacy system as-is, with no infrastructure prerequisite | n/a |

Legacyleap breaks the loop through comprehension-before-transformation.

The Assessment Agent and Documentation Agent work on legacy systems as-is, including VB6, Classic ASP, ADO.NET, Web Forms, WCF, Java EE, EJB, JSP/Servlets, Struts, AngularJS, Ab Initio ETL pipelines, and more, without requiring modernized infrastructure as a prerequisite.

The entire source code, dependency graph, and module structure are mapped before any transformation begins. For a deeper look, here is how GenAI reads and comprehends legacy systems without requiring modernized infrastructure first.

Modernization that proceeds without this full-system context produces the tightly coupled architectures that are the primary reason AI agents can’t orchestrate across enterprise systems.

Once the system is fully comprehended, the Recommendation Agent identifies and prioritizes the AI-enabling capabilities that unblock the most use cases first: clean API surfaces, real-time data exposure, event-driven components, and modular service boundaries.

AI initiatives become progressively unblocked as modernization advances, rather than waiting on a completed program before any production deployment is possible. This is the sequencing advantage that consulting engagements and point tools structurally cannot provide.

The transformation itself is governed by design. The Modernization Agent executes diff-based, human-reviewed code changes. AI cannot merge, deploy, or apply changes to production without explicit review.

Every change is reversible and carries a full audit trail. For teams in regulated environments, the legitimate concern when evaluating any AI-executed modernization platform is whether governance is enforced architecturally or simply promised in the documentation.

In Legacyleap, the diff-based review model is the enforcement mechanism, not a guardrail layered on top.

The QA Agent validates behavioral parity: the modernized system does what the legacy system did. AI initiatives built on progressively modernized foundations do not inherit silent regressions. Behavioral continuity is enforced through testing, not assumed.
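Behavioral parity checks of this kind are commonly built as characterization (or golden-master) tests: run the legacy and modernized implementations on the same inputs and flag any output that differs. The sketch below is a generic illustration of that technique, not Legacyleap's QA Agent, and both invoice functions are hypothetical stand-ins. The modernized version swaps exact decimal arithmetic for floats and Python's built-in `round`, and the check catches the resulting silent regression on an input like 2.675.

```python
from decimal import Decimal, ROUND_HALF_UP


def legacy_invoice_total(amounts: list[str], tax_rate: str) -> str:
    """Hypothetical legacy logic: exact decimals, rounded half-up to cents."""
    subtotal = sum(Decimal(a) for a in amounts)
    total = subtotal * (1 + Decimal(tax_rate))
    return str(total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP))


def modern_invoice_total(amounts: list[str], tax_rate: str) -> str:
    """Hypothetical 'modernized' rewrite: binary floats and built-in round()."""
    subtotal = sum(float(a) for a in amounts)
    total = subtotal * (1 + float(tax_rate))
    return f"{round(total, 2):.2f}"


def parity_report(cases: list[tuple[list[str], str]]) -> list[tuple]:
    """Return (inputs..., legacy_output, modern_output) for every mismatch."""
    mismatches = []
    for amounts, rate in cases:
        old = legacy_invoice_total(amounts, rate)
        new = modern_invoice_total(amounts, rate)
        if old != new:
            mismatches.append((amounts, rate, old, new))
    return mismatches


cases = [
    (["10.00", "5.50"], "0.08"),
    (["0.10", "0.20"], "0"),
    (["2.675"], "0"),
]
print(parity_report(cases))  # [(['2.675'], '0', '2.68', '2.67')]
```

The first two cases agree, which is exactly why parity suites need broad, representative inputs: the regression only surfaces on values where binary floating point and half-up decimal rounding disagree.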

The entry point is the $0 Modernization Assessment. It produces a dependency and module map, risk indicators, a modernization blueprint, architecture observations, effort and timeline ranges, recommended migration targets, and a structured modernization readiness view.

These provide a foundation for building an AI-enhanced modernization roadmap that starts from the actual state of the system. No consulting prerequisite. No modernized infrastructure is required to begin.

Legacyleap engagements report a 40–50% reduction in modernization effort, with potential for up to 70% depending on stack and scope.

The AI Budget and the Modernization Budget Are the Same Line Item

The framing most leadership teams apply to modernization is that it competes with the AI budget. In practice, they are the same budget. The question is whether the spend produces compounding AI returns or absorbs the recurring cost of production failures.

When 60–80% of the IT budget is already committed to legacy maintenance, modernization does not add cost. It redirects spending from keeping the lights on to building capabilities that compound.

The AI budget is not being consumed by a model problem. It is being consumed by the architecture. Addressing the architecture problem is not a precondition for AI investment. It is the AI investment, made structurally rather than speculatively.

The planning horizon for this decision has compressed. Tech leaders accustomed to three-to-five-year modernization programs are operating in an environment where foundational architectural choices must be made in months.

The two-year window to demonstrate AI value is a board-level pressure point that is already active in most enterprises. The architecture decisions that enable or block AI production deployment are not future roadmap items.

For organizations that have AI initiatives currently in planning, the architectural readiness question belongs in this quarter’s conversation, not the next roadmap cycle. AI can’t learn from data it can’t access, which is why database modernization belongs on the same initiative list as every AI program currently in flight.

The cost of inaction is concrete: a $7.2 million average sunk cost per abandoned initiative, an abandonment rate that rose from 17% to 42% in a single year, and a commercial window that narrows with every sprint cycle that produces a POC the architecture cannot promote to production.

The choice is not whether to modernize. It is whether to modernize in the right sequence, with governance, before the window closes.

Your leadership already has AI initiatives planned. The question is whether your architecture can support them in production or just in a demo.

The $0 Modernization Assessment gives you a dependency map, risk indicators, and a structured readiness view before you commit another quarter of engineering time to a pilot that won’t ship. No modernized infrastructure required to begin.


FAQs

Why do enterprise AI projects fail?

The dominant cause is architectural, not the models themselves. Enterprise AI projects fail at the infrastructure layer because the systems they are deployed on were never designed to support them. Batch data cycles, partial API compatibility, multi-second response latencies, and the absence of observability infrastructure each independently block production deployment. Together, they explain why fewer than one in three GenAI experiments reaches production, regardless of how well the pilot performed.

What does AI-ready architecture look like?

AI-ready architecture is a specific, checkable state. It requires six structural capabilities: a clean API surface that agents can call reliably; real-time data access rather than batch cycles; governed, traceable data that AI outputs can be audited against; event-driven systems that emit signals agents can act on; end-to-end observability across agent execution; and modular, independently deployable services that support CI/CD for AI model updates. Each of these is testable. An organization can evaluate its current systems against each criterion before committing to a modernization path.

Can AI work on legacy systems?

For narrow, single-turn use cases against controlled data, yes, with caveats. For agentic AI that executes multi-turn workflows across interconnected enterprise systems, the answer is effectively no. Legacy systems lack the API compatibility, latency performance, real-time data access, and observability infrastructure that agentic orchestration requires. The POC may work because it is scoped to avoid these constraints. Production deployment encounters them directly. The distinction is between AI that works in a demo and AI that holds up in the actual system.

How does technical debt block AI deployment?

Technical debt is the direct mechanism through which legacy architecture blocks AI deployment. When 60–80% of the IT budget is consumed by legacy maintenance, engineering capacity for bridging POCs to production disappears before it can be allocated. The debt is financial and structural. Tightly coupled systems, undocumented APIs, missing observability, and batch-only data access are all forms of technical debt that surface as AI blockers the moment a deployment moves beyond a controlled pilot environment. Reducing technical debt in the right sequence, prioritizing AI-enabling capabilities first, is what converts an AI budget from speculative spend into compounding infrastructure.

Why do AI pilots succeed when production deployments fail?

Because POC environments are controlled by design. Data is curated before the demo. APIs are mocked or simplified. The agent runs against a subset of systems selected because they work reliably. None of those conditions exist in production, where the AI encounters the actual architecture: stale batch data, hardcoded business logic never exposed as an API, missing observability, and no independent deployment path for model updates. The four architectural failure modes that are invisible in a POC (data inaccessibility, integration rigidity, API latency, and governance gaps) are exactly what production surfaces. The gap is not a project management problem. It is an architectural one.

References

[1] S&P Global Market Intelligence (2025). “AI experiences rapid adoption, but with mixed outcomes.” Survey of 1,006 enterprises across North America and Europe. Reports a 42% abandonment rate (up from 17% in 2024) and a $7.2M average sunk cost per abandoned initiative. https://www.spglobal.com/market-intelligence/en/news-insights/research/ai-experiences-rapid-adoption-but-with-mixed-outcomes-highlights-from-vote-ai-machine-learning

[2] MIT Sloan Management Review (2025), as cited in Pertama Partners, “AI Project Failure Rate 2026.” Infrastructure limitations account for 64% of Gen AI scaling failures. https://www.pertamapartners.com/insights/ai-project-failure-statistics-2026

[3] McKinsey & Company (April 2026). “Building the foundations for agentic AI at scale.” 8 in 10 companies cite data limitations as a roadblock to scaling agentic AI. https://www.mckinsey.com/capabilities/mckinsey-technology/our-insights/building-the-foundations-for-agentic-ai-at-scale

[4] Singh, European Journal of Computer Science and Information Technology (2025). Legacy systems: 28.5% compatibility with AI integration endpoints; average API response latency 3.1s vs. 0.4s AI standard; average 52 custom API endpoints required per legacy system (~3,200 development hours). Journal citation; no public URL available.

[5] Pertama Partners (2026). “AI Project Failure Rate 2026.” Analysis of 2,400+ enterprise AI initiatives: organizations that invest in data platforms before launching AI achieve 2.6x higher success rates. https://www.pertamapartners.com/insights/ai-project-failure-statistics-2026