The Tightly Coupled Trap

Tightly coupled application modernization is one of the highest-failure-rate initiatives in legacy transformation because teams start transforming before they understand what the system does.

These are systems where UI, business logic, data access, and integration layers have grown into each other over years of feature additions, hotfixes, and undocumented workarounds.

In tightly coupled architecture, a single change in one layer produces defects in places no one predicted. The only reliable documentation is often the institutional knowledge held by engineers who may no longer be available.

And the accumulated technical debt compounds the problem because each layer has accrued its own undocumented rules, its own implicit contracts, and its own workarounds that exist nowhere except in running code.

The pattern is consistent across stacks like VB6, .NET Web Forms, Java EE, Oracle PL/SQL. Business logic is not in one place. It is in every place. And the teams responsible for modernizing these systems are working against a knowledge-erosion clock as the people who understand the system retire or leave.

The sequence that works is consistent:

- map the full system first,

- reduce scope by eliminating dead code,

- define boundaries based on discovered logic,

- then modernize incrementally with continuous validation.

The rest of this article breaks down why that sequence matters and how to execute it.

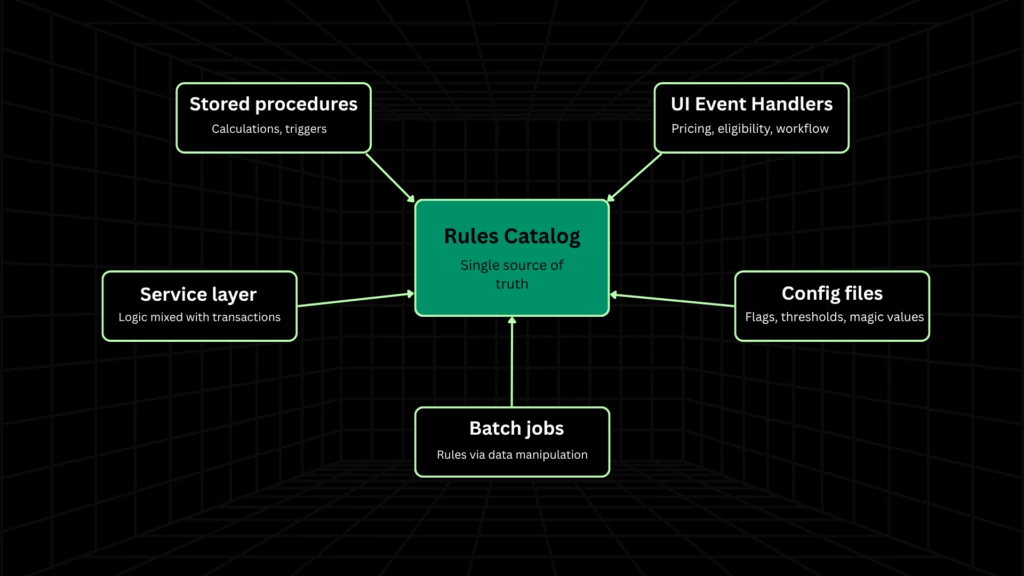

Where Business Logic Hides in Tightly Coupled Systems

Before any modernization pattern can be applied, teams need to know where business logic actually lives. In tightly coupled systems, the answer is: everywhere.

- UI event handlers: button clicks, form loads, and grid cell changes containing pricing rules, eligibility checks, and workflow logic

- Stored procedures and database triggers: calculation logic, cascading updates, referential integrity rules

- Application service layers: business rules interleaved with logging and transaction management

- Configuration files: magic values, flags, and thresholds that silently control business behavior

- Batch jobs and scheduled tasks: rules enforced through data manipulation rather than explicit code

The distribution pattern varies by stack, but the problem is universal: no single layer contains the full picture.

In VB6 and desktop applications, industry experience consistently shows that 40–60% of business rules live in UI event handlers alone. In Oracle-centric systems, logic spans stored procedure call chains across multiple schemas and packages. In .NET Web Forms, logic is coupled to page lifecycle events.

Any modernization approach that examines only the application layer will miss a significant share of the rules that govern how the system actually behaves.

Why Architecture Patterns Alone Don’t Solve This

The three most commonly recommended approaches for decomposing tightly coupled systems are the strangler fig pattern, Domain-Driven Design, and the modular monolith. Each is valid.

But none is sufficient on its own because each assumes a prerequisite that has not been met.

The Strangler Fig pattern

This works when you can identify clean, separable business capabilities and extract them incrementally behind a façade. In a tightly coupled system where logic is scattered across layers, you cannot define what to strangle until you know where the logic lives.

As Fowler and Dehghani’s canonical guide on monolith decomposition demonstrates, the pattern provides a sequencing mechanism for extraction, not the discovery work that makes extraction possible [1].

Domain-Driven Design

DDD provides bounded contexts for structuring a modernized system, but context identification depends on knowing what business rules exist and where they reside. DDD tells you how to model domains. It does not tell you how to discover rules buried in event handlers and stored procedures across a system with no current documentation.

The modular monolith

Restructuring a monolith internally into clearly bounded modules while keeping a single deployment has emerged as the practitioner consensus for the right first architectural move. It delivers roughly 80% of microservices benefits without the operational complexity of distribution.

But defining module boundaries still requires understanding which business rules belong to which domains.

For a deeper analysis of when each target architecture is appropriate, see monolith vs. microservices.

The common gap

| Pattern | What It Assumes | What It Provides | The Gap |

| Strangler Fig | You can identify separable capabilities | Incremental extraction sequencing | No discovery of where logic lives |

| Domain-Driven Design | You know what rules exist and where | Bounded contexts and domain modeling | No extraction from legacy code |

| Modular Monolith | You understand domain boundaries | Internal separation without distribution overhead | No mapping of scattered business logic |

The gap is always the same: you do not yet understand the system. All three patterns assume comprehension has been completed. None of them provide it.

This is why Legacyleap’s platform starts with full-codebase understanding before any transformation begins.

Comprehension First: Mapping What the System Actually Does

Most modernization failures for tightly coupled systems happen because teams start changing code before they understand what it does.

Comprehension is not a discovery workshop or a two-week assessment that produces a slide deck. It is the systematic work of:

- mapping the full dependency graph across all layers,

- extracting business rules from every location where logic lives,

- validating those rules with domain experts, and

- producing a documented rules catalog that becomes the source of truth for the modernized system.

Two-track extraction

This requires a two-track approach:

- Technical extraction: Static analysis, dependency mapping, and code visualization to identify where rules live. This includes conditional logic, calculations, validation routines, data transformations across UI, application, database, and integration layers.

- Functional validation: Domain expert review to confirm which extracted rules are still current, which are obsolete, and which have been superseded by off-system workarounds.

Neither track alone is sufficient. Technical extraction finds what the code does but misses business intent. Functional validation captures intent but misses edge cases that only exist in code.

Scope reduction as a direct output

Industry experience consistently shows that 30-45% of legacy codebases consist of dead code, unused features, and redundant components. Identifying and eliminating what does not need to be migrated is a concrete effort and budget savings before any transformation begins.

For programs where budget and timeline are the binding constraints, this is where comprehension pays for itself by reducing effective scope by a third or more before a single line of code is transformed.

Stack-specific extraction realities

The extraction approach differs fundamentally by source stack:

- VB6 / desktop apps: 40–60% of business rules live in UI event handlers like button clicks, form loads, grid cell changes containing pricing, eligibility, and workflow logic.

- Oracle-centric systems: Logic lives in stored procedure call chains spanning multiple schemas, packages, and triggers.

- .NET Web Forms: Logic is coupled to page lifecycle events, making extraction inseparable from understanding the rendering pipeline.

Evidence at scale

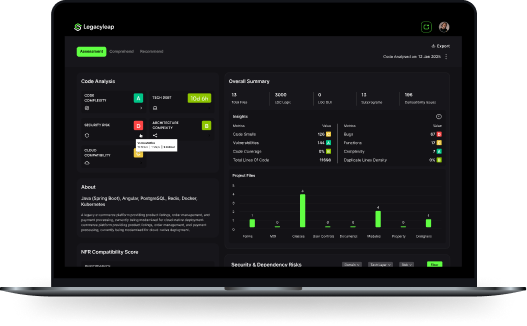

In a recent VB6 insurance modernization engagement, Legacyleap’s platform mapped 327 files and 242,613 COM calls using Gen AI, producing a complete dependency graph and business rule catalog.

A custom test framework was built during the comprehension phase, because for systems where business rules were never formally documented, the legacy system’s behavior is the only specification. Every extracted rule became a test case.

For a detailed walkthrough of how this applies to VB6 systems, see VB6 to .NET modernization.

The government case study documented by Wednesday Solutions reinforces the scale: 4.2 million lines of code, 11,000 business rules extracted in four months [2]. This is not a discovery exercise that fits into a sprint. It is the binding constraint of the entire modernization program.

How Legacyleap operationalizes this

Instead of manually mapping dependencies across thousands of files and interviewing stakeholders over months, Legacyleap’s Assessment and Documentation Agents execute this comprehension workflow at full-codebase scope.

The Assessment Agent maps dependencies, identifies risk areas, and detects dead code. The Documentation Agent extracts business rules from all layers and produces reconstructed documentation, workflow maps, and module-level descriptions.

For teams exploring how source code intelligence applies to large legacy systems, this is where it operationalizes.

If you want to see what this comprehension step reveals for your system, Legacyleap’s $0 Modernization Assessment maps dependencies, identifies risk areas, and produces a modernization blueprint, before any commitment.

The Modernization Workflow: From Boundaries to Production

Once business rules are extracted, mapped, and validated, the comprehension output becomes the input that architecture patterns need to work with.

The following sequence is not a menu of options but a progression, where each phase depends on the outputs of the one before it.

Boundary definition via DDD on discovered logic

Bounded contexts are defined based on business domains surfaced during comprehension, not on technical layers or module file structure. The rules catalog tells you which business rules belong together, which share data dependencies, and which can be isolated.

This is where DDD earns its value: applied to discovered logic, not to assumptions about where logic should live.

Modular monolith as the first move

Restructure the monolith internally into clearly bounded modules with defined interfaces, keeping a single deployment unit.

This is not a compromise. It delivers clean separation, domain ownership, and independent development without introducing the operational complexity of distributed systems.

For a broader view of how this connects to the full modernization lifecycle, see the application modernization framework.

The distributed monolith warning

A distributed monolith is a system where services are deployed independently but remain tightly coupled through synchronous calls, shared databases, or rigid dependencies, producing all the operational complexity of microservices with none of the benefits.

This is what happens when teams skip the modular monolith step and decompose directly into services without properly decoupling business logic. The root cause is always the same: insufficient comprehension of where business logic lives before cutting.

Incremental extraction where justified

Once boundaries are proven in the modular monolith, specific modules with well-understood data and logic ownership can be extracted as independent services through a façade/routing layer. Not every module needs to become a microservice.

The decision to extract should be driven by scaling requirements, deployment independence needs, or team autonomy goals, not by architectural ambition.

Stored procedure handling

Treating all stored procedures as a single migration category is a common planning error. The right approach depends on complexity and risk:

- High-complexity SPs with regulatory implications: incremental extraction with shadow mode validation

- Structurally consistent SPs: candidates for automated conversion to application-layer code

- Stable, low-change SPs: wrap with modern APIs and defer

Comparing modernization approaches

| Approach | What It Means | Best For | Risk Level |

| Modular monolith | Internal restructuring into bounded modules, single deployment | Most tightly coupled systems as a first move | Low |

| Strangler fig to services | Incremental extraction of proven modules behind a façade | Modules with clear data ownership and scaling needs | Moderate |

| Direct microservices | Full decomposition into independently deployed services | Systems already internally modularized | High |

| Full rewrite | Greenfield rebuild of the application | Rarely justified — loss of embedded business logic | Very high |

Continuous validation throughout

Regression testing built from comprehension-phase test cases runs at every transformation step. Behavior parity checks between legacy and modernized systems are woven into the workflow, not treated as a post-migration gate.

For teams practicing incremental modernization, this is what makes each increment safe to ship.

How Legacyleap executes this phase

Instead of relying on generic architecture templates or manual transformation planning, Legacyleap’s agents execute each stage of this workflow grounded in the discovered system state.

The Recommendation Agent suggests modernization paths based on comprehension outputs. The Modernization Agent executes diff-based, human-reviewed transformations. The QA Agent runs automated regression and parity checks using the test framework built during comprehension.

All transformations are reversible and require human review before acceptance.

How Legacyleap Connects the Full Workflow

The agents described in the previous two sections are not five separate tools. They form one workflow where each phase produces outputs the next phase consumes.

The Assessment Agent’s dependency map feeds the Documentation Agent’s rule extraction. The documented rules catalog feeds the Recommendation Agent’s modernization paths. The recommended paths feed the Modernization Agent’s diff-based transformations. The transformation outputs feed the QA Agent’s behavior parity validation.

This connected handoff is what makes the comprehension-to-production sequence operationally real rather than aspirational.

What happens without it

In one case, an insurance company spent $3.4M and 18 months rewriting a VB6 claims processing application, only to discover 47 undocumented business rules embedded in TrueDBGrid event handlers that were invisible to every tool and team member until production [3].

The rules were never extracted. They were never tested. They surfaced as production failures because comprehension and validation were treated as separate concerns rather than a single governed sequence.

How this differs from copilots

Copilots operate at the prompt and file level, which is useful for isolated tasks, but structurally incapable of comprehending cross-cutting dependencies across hundreds of files and multiple layers.

For tightly coupled systems, prompt-level assistance cannot map the business logic distribution that makes these systems dangerous to change.

Legacyleap operates at the full-codebase level with multi-agent orchestration and parity validation built in. This is an architectural distinction, not a feature comparison.

The entry point

The $0 Modernization Assessment produces a dependency and module map, risk indicators, modernization blueprint, architecture observations, effort and timeline ranges, and recommended migration targets at no cost, before any commitment.

For a reader managing a tightly coupled system, this is the lowest-friction entry to the comprehension workflow this article describes.

Modernization Checklist for Tightly Coupled Systems

- Map the full dependency graph across all layers: UI, application, database, integration, batch, and configuration.

- Extract business rules from every location where logic lives, not just the application layer.

- Validate extracted rules with domain experts to separate current logic from obsolete workarounds.

- Reduce scope by eliminating dead code, unused features, and redundant components before transformation begins.

- Define bounded contexts using DDD applied to discovered logic, not assumptions about where logic should live.

- Restructure as a modular monolith first – clean internal boundaries, single deployment, no distribution overhead.

- Extract services incrementally only where scaling, deployment, or team autonomy requirements justify it.

- Validate continuously. Regression and parity checks at every transformation step, not as a post-migration gate.

Comprehension Is the Starting Point

Tightly coupled systems fail modernization when teams start with architecture before comprehension. This sequence of comprehending the full system, reducing scope, defining boundaries from discovered logic, and modernizing incrementally with continuous validation is what turns a high-risk program into a manageable one.

If you are managing a tightly coupled system with scattered business logic and undocumented rules, the first step is not choosing a pattern. It is understanding what the system actually does.

Request a $0 Modernization Assessment → Get a dependency map, risk indicators, and a modernization blueprint for your system — at no cost.

Book a Demo → See Legacyleap Studio and the agent workflows in action.

FAQs

Through a two-track process. Technical extraction identifies rules in code, but domain experts must confirm which rules are current, which are obsolete, and which have been replaced by off-system workarounds. The validated subset becomes the rules catalog that governs transformation. Rules that cannot be validated are flagged for manual review rather than carried forward as assumptions.

Reliable estimates come after comprehension, not before. Until the dependency graph is mapped, dead code is identified, and business rules are cataloged, any estimate is a guess. Scope reduction alone (typically 30–45% of the codebase) changes the timeline materially. Programs that estimate before comprehension consistently overscope and underdeliver.

When incremental refactoring no longer reduces coupling. If changes still cascade across layers after several refactoring cycles, the system needs structural intervention; bounded modules with defined interfaces, not tighter code within the existing architecture. The modular monolith is that structural move: internal separation without the overhead of distribution.

Three conditions: the module owns its data with minimal cross-boundary queries, its business logic is fully contained within its boundary, and it has independent scaling or deployment requirements that justify the operational overhead. If any condition is unmet, the module is better served inside the modular monolith. Extraction without data ownership creates distributed monoliths.

The codebase itself becomes the primary source of truth. Static analysis and dependency mapping reconstruct system understanding from code, while domain expert interviews fill gaps in business intent. This is precisely the scenario where Gen AI-powered comprehension has the highest impact as it can process thousands of files and map cross-layer dependencies at a scale manual discovery cannot match.

References

[1] Fowler, M., & Dehghani, Z. — “How to Break a Monolith into Microservices.” martinfowler.com

[2] Wednesday Solutions — “Business Rule Extraction.” wednesdaysolutions.com

[3] $3.4M Claims Processing Failure — softwaremodernizationservices.com