The Production Gap No One Budgets For

Enterprise modernization programs rarely fail at the assessment stage. Assessments get completed. Strategy decks get delivered. Code gets refactored. And then nothing reaches production.

This is the production gap: the phase between transformation-complete and production-deployed. It is the least likely phase to be owned, budgeted, or planned for. It is also where modernization programs go to die.

The pattern is documented and widespread. Even federal modernization programs with full funding and multi-year timelines have struggled: a 2025 GAO review found that only 3 of 10 critical systems had been successfully modernized over six years, with 8 of 11 agencies lacking complete modernization plans [1].

These were not under-resourced initiatives. The delivery models governing those programs could not carry the work from comprehension to production.

The instinct is to blame the technology choices or vendor selection. Neither explains the pattern. Programs that select the right stack, hire experienced engineers, and complete thorough assessments still stall at the production boundary.

The common variable is not what gets built. It is how the work is organized across the full lifecycle and who owns the sequence from assessment through deployment.

That is the question this article examines: not “platform or services?” but “who governs the continuous path to production, and is that governance encoded in a system or dependent on which team happens to be staffed?”

Why Services-Led Delivery Is the Default, and Where It Structurally Stalls

Services-led delivery is the industry default for good reason. It brings domain expertise, execution capacity, experienced engineers, and the ability to staff complex programs at scale.

For many organizations, services firms are the only viable way to move large application estates forward. That value is real, and the critique here is structural, not personal.

But outcomes are team-dependent by design. When methodology, quality standards, and validation rigor live in people rather than systems, results vary based on who is staffed, how decisions are documented, and whether validation is enforced consistently. This is not a failure of any particular team. It is a feature of the model.

Where Services-Led Modernization Breaks in Practice

The structural limitations surface in predictable places, and each one maps directly to why programs stall.

- Knowledge loss is built into the engagement model. When consulting engagements end or teams rotate, the people who understood the system leave. New teams inherit incomplete context, miss critical requirements, and delay production readiness. Every handoff introduces a gap that must be bridged manually, if it is bridged at all. A typical sequence: the assessment team that mapped the legacy system exits; a new delivery team inherits a strategy deck and partial documentation, spends weeks rebuilding context that already existed, and watches the production timeline slip before transformation even begins. This is why programs stall at handoffs.

- Time-based contracts create incentive misalignment. A McKinsey study documented a pharmaceutical company that outsourced its migration to system integrators (SIs) under time-and-materials contracts. The SIs had limited incentive to accelerate delivery, and the project ended 75% behind plan and 50% over budget [2]. This is not an isolated incident. It is an incentive structure working as designed.

- Internal accelerators are not portable. Services firms build internal methodologies and accelerators that work for the teams that created them. They rarely transfer across engagements, survive team rotation, or produce auditable outputs outside their original context. The methodology is real; its reproducibility outside specific teams is not.

- Dual-track resourcing is consistently underestimated. Legacy systems must keep running during modernization. The overhead of maintaining production operations alongside active transformation is rarely budgeted adequately. Fortune 100 companies have abandoned multi-million-dollar modernization projects because the cost of running both tracks exceeded what was planned.

| Structural Limitation | Why It Happens | Where It Shows Up |
|---|---|---|
| Team-dependent outcomes | Methodology lives in people, not systems | Quality variance across engagements |
| Knowledge loss at handoffs | Engagement-scoped context with no system persistence | Delays, rework, missed requirements |
| Incentive misalignment | T&M contracts reward duration, not outcomes | Timeline drift, budget overruns |
| Non-portable accelerators | Built for specific teams and contexts | Inconsistent repeatability |
| Dual-track budget gaps | Parallel legacy operations underestimated | Program abandonment or scope reduction |

None of this means services-led delivery is wrong. It means services-led delivery has a structural ceiling, and that ceiling sits precisely at the production boundary where comprehension must survive team transitions and validation must be enforced consistently.

Pilot Purgatory: Why Assessments Never Become Production

Pilot purgatory is the state where an organization runs successive assessments, proofs of concept, and limited-scope pilots without ever shipping a modernized system to production.

Each initiative demonstrates technical feasibility in isolation but fails to cross the boundary into deployed, operational software.

The pattern is self-reinforcing because every new pilot resets the timeline, consumes budget, and produces artifacts that inform the next pilot rather than the next deployment.

Pilot purgatory is what the production gap looks like once it becomes chronic. The cycle is predictable and widespread.

The pattern works like this:

- A consulting engagement produces an assessment and strategy deck.

- A pilot demonstrates technical feasibility on a low-risk application.

- And then the initiative stalls.

Production success criteria were never defined up front. No single owner carries the work from discovery through deployment. The organizational changes required for adoption were never scoped or budgeted.

IDC research puts a number on it: 88% of proofs of concept never reach production. For every 33 launched, only 4 make it to a deployed system [3].
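The two IDC figures are consistent with each other: if 4 of every 33 proofs of concept reach production, the share that does not is

$$
1 - \frac{4}{33} = \frac{29}{33} \approx 0.879 \approx 88\%
$$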

The numbers are stark, but the mechanism matters more than the statistic.

Pilot purgatory persists partly because of a definitional confusion: POC, pilot, and production deployment are used interchangeably in vendor proposals and internal reporting.

This lets organizations report success at stages with no business consequences. An assessment that produces a strategy deck is counted as progress. A pilot that demonstrates feasibility on a low-risk application is reported as validation. Neither moves the enterprise closer to production.

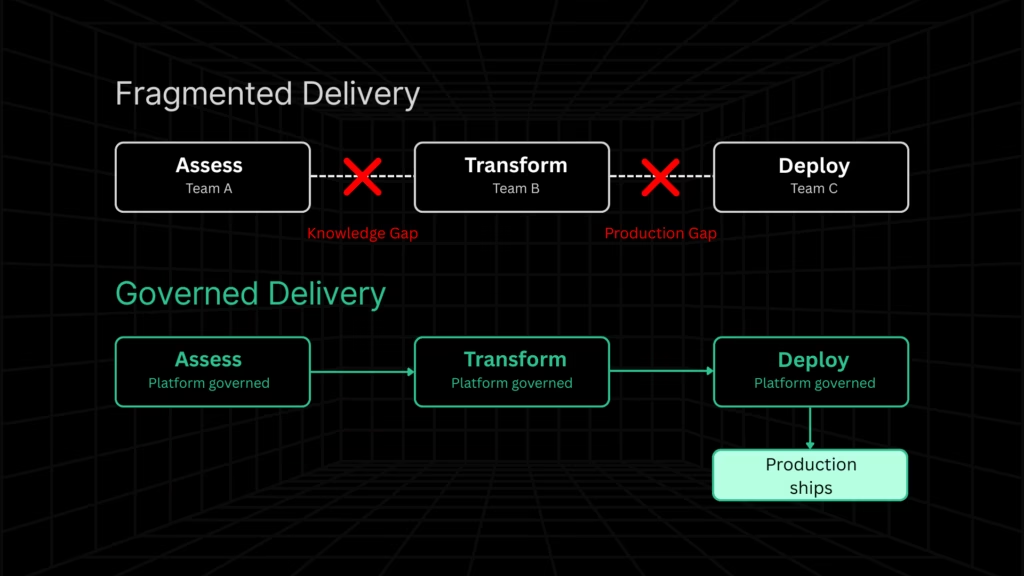

The connection to the delivery model is direct. Pilot purgatory is the structural consequence of organizing modernization as disconnected phases (assess, transform, deploy), each owned by a different team under a different contract.

Each handoff introduces a gap. No single entity owns the continuous path to production. This is not a people problem. It is a delivery architecture problem.

How to Break Out of Pilot Purgatory

Breaking the cycle requires structural changes to the delivery model, not better project management within the existing one:

- Define production as the success metric from day one. If the engagement’s deliverable is a report, a strategy deck, or a pilot, it is not a modernization program. It is a pre-modernization exercise. Name it accordingly and budget for what comes after.

- Assign lifecycle ownership to a single system or team. The path from assessment to production cannot be distributed across disconnected contracts with different owners. Someone, or something, must carry continuity across every phase.

- Budget for deployment and organizational change, not just transformation. Transformation without deployment artifacts, cutover plans, and change management is incomplete work. If the budget ends at code refactoring, the program ends at code refactoring.

- Require production-ready outputs as standard deliverables. Validated, deployment-compatible artifacts should be the baseline expectation for any modernization engagement.

Your modernization assessment should initiate a governed path to production, not end with a report.

What Platform-Led Delivery Owns, and the Standard It Must Meet

The services-led ceiling and the pilot purgatory pattern point to the same structural requirement: modernization needs a governed system that owns the lifecycle from comprehension through production-readiness.

That is what platform-led delivery means when the term is used precisely.

What Is Platform-Led Modernization?

Platform-led modernization is a delivery model where the complete modernization lifecycle is encoded in a persistent system rather than distributed across teams, contracts, or consulting engagements.

The platform governs the sequence from assessment to production. Methodology, quality standards, and validation rigor are system properties, not team-dependent variables.

What persists between engagements is not documentation or tribal knowledge. It is the platform itself.

Where Platform-Led Modernization Falls Short

The honest ceiling matters here, and acknowledging it is what separates credible platform claims from vendor theater.

Automated code transformation handles roughly a third of the work in a complex modernization project. The remaining two-thirds (stakeholder alignment, data migration, organizational change, regulatory interpretation, and complex architectural judgment) requires human expertise.

Platforms do not replace experienced engineers. They reduce the volume of manual comprehension and transformation work, freeing those engineers to focus on judgment-intensive tasks.

Any platform vendor that claims to automate the full lifecycle is either overpromising or defining the lifecycle too narrowly. The platform’s role is to govern the path and reduce the manual burden. The human role is to exercise judgment where automation cannot.

Research from Forrester supports the value of governed delivery: 71% of partner-led migrations complete on time, compared with 49% for organizations attempting modernization without structured delivery governance [4].

The platform does not do everything. It governs the path so that everything gets done.

What Production-Ready Modernization Actually Looks Like

Most modernization content avoids defining what production-ready output actually looks like. This is the gap that lets vendors claim “modernization” for work that ends at transformation.

Production-ready means a modernized system can be deployed safely, operated reliably, and maintained independently. That requires four categories of output, all present:

| Output Category | What It Contains | The Test |
|---|---|---|
| Code artifacts | Refactored source code in the target stack, clean project structures that compile and build, configuration files, environment bindings, infrastructure templates | Does the code build, run, and integrate with the target environment without manual assembly? |
| Validation artifacts | Behavior-parity evidence, regression test suites covering critical business flows, edge-case documentation for high-risk logic | Can you prove the modernized system produces equivalent outputs to the legacy system? |
| Deployment artifacts | CI/CD pipeline integration, cutover plans with rollback strategies, staged rollout options, operational runbooks | Can the system be deployed to production with a defined rollback path? |
| Documentation artifacts | Reconstructed business logic documentation, dependency maps reflecting the modernized architecture, architecture decision records | Can a new engineer understand the system and make safe changes without the original team? |

If a vendor delivers refactored code but not these four categories, they delivered a transformation, not a modernization. This distinction is the difference between code that exists and a system that runs in production.
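The four-category rule can be written as a simple completeness check. This is a hypothetical sketch: the category names mirror the production-readiness table, the verdict labels reuse this article's own terms ("pre-modernization", "transformation", "modernization"), and `classify_delivery` is an illustrative helper rather than an existing tool.

```python
from enum import Enum


class Category(Enum):
    """The four output categories a production-ready delivery must include."""
    CODE = "code artifacts"
    VALIDATION = "validation artifacts"
    DEPLOYMENT = "deployment artifacts"
    DOCUMENTATION = "documentation artifacts"


def classify_delivery(delivered: set[Category]) -> tuple[str, list[Category]]:
    """Label a delivery and list the categories it is missing (hypothetical helper)."""
    missing = [c for c in Category if c not in delivered]
    if not missing:
        return "modernization", missing
    if Category.CODE in delivered:
        # Refactored code without the other categories is a transformation.
        return "transformation", missing
    # No code at all: a report or assessment, i.e. a pre-modernization exercise.
    return "pre-modernization", missing


verdict, missing = classify_delivery({Category.CODE, Category.DOCUMENTATION})
print(verdict, [c.value for c in missing])
# transformation ['validation artifacts', 'deployment artifacts']
```

The check is deliberately all-or-nothing, matching the rule that all four categories must be present; a real evaluation would also apply each row's test rather than only confirming that an artifact exists.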

How to Evaluate Any Vendor Against This Standard

The production-readiness standard turns into a practical evaluation lens. Patterns that signal structural risk and patterns that signal production-readiness are both visible before a contract is signed.

Red flags:

- Assessment and production delivery are separate SOWs with different teams

- Validation is described as “QA support” rather than a governed lifecycle phase

- Deployment readiness is not mentioned in the proposal

- Case studies describe assessments completed, not systems deployed

Positive signals:

- Transformation outputs include deployment artifacts, not just refactored code

- Validation is built into the transformation workflow, not bolted on afterward

- The engagement model includes production-readiness milestones

- Knowledge is encoded in a platform that persists beyond the engagement

Key questions to ask any vendor:

- Who owns the sequence from assessment to production deployment – one team, or a handoff?

- What deployment artifacts are included in the standard engagement?

- How is validation enforced – standard output, or discretionary activity?

- What happens to institutional knowledge when team members rotate?

- Is pricing time-based or outcome-based?

For a broader view of how modernization platforms compare on lifecycle ownership, see our analysis of application modernization platforms and providers in the USA.

Platform-Led vs Services-Led vs Hybrid: How the Models Compare

Before moving to how Legacyleap addresses these patterns specifically, here is how the three delivery models compare across the dimensions the article has diagnosed:

| Dimension | Platform-Led | Services-Led | Hybrid (Platform-Governed) |
|---|---|---|---|
| Methodology | Encoded in the system | Carried by people | System-governed, people-amplified |
| Consistency | High: repeatable across engagements | Variable: depends on team staffing | Balanced: platform sets the floor |
| Production ownership | Partial: limited by automation ceiling | Fragmented: handoffs between phases | Continuous: platform governs, services execute |
| Knowledge persistence | Lives in the platform | Leaves with the team | Encoded in the platform, enriched by teams |
| Validation | Governed lifecycle phase | Discretionary or bolted on | Governed phase with human review |
| Primary failure mode | Automation ceiling on complex judgment | Knowledge loss and timeline drift | Coordination overhead between platform and services |
| Best suited for | Scale and repeatability | Deep complexity and domain expertise | Enterprise modernization at production scale |

The hybrid model is the recognition that platforms own the lifecycle and services own the judgment that the lifecycle requires. Production happens when both operate within a governed system, not when they are procured separately and stitched together after the fact.

How Legacyleap Governs the Path from Assessment to Production

If modernization requires continuous lifecycle ownership, then the delivery system must persist beyond any individual team, contract, or engagement.

It must encode methodology in a system that carries comprehension, transformation, validation, and deployment-readiness as governed phases rather than discretionary activities.

That is the gap most delivery models cannot solve. Legacyleap is built to solve it.

Lifecycle Governance Through Five Orchestrated Agents

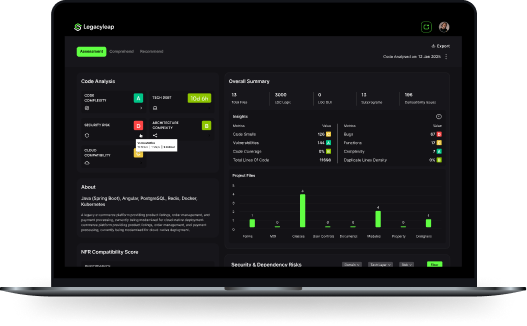

Legacyleap governs the modernization lifecycle through five agents, each responsible for a distinct phase of the path from comprehension to production-readiness. The methodology is encoded in the platform. It does not depend on which team is staffed or how long an engagement lasts.

Each agent addresses a specific failure mode that the article has diagnosed:

- The Assessment Agent and Documentation Agent encode system comprehension so knowledge survives team rotation. Dependency maps, business rule extraction, and architecture documentation are platform outputs, not consulting deliverables that leave when the engagement ends.

- The Modernization Agent produces diff-based, human-reviewed transformations that maintain consistency regardless of who reviews them. Every change is visible, reversible, and traceable to the original codebase.

- The QA Agent validates behavior parity as a governed lifecycle phase. This is not discretionary QA support bolted onto the end of an engagement. It is an encoded step that runs before any transformation is accepted.
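Behavior parity, the check the QA Agent is described as enforcing, can be illustrated generically: run identical inputs through the legacy and modernized logic and flag any divergence. The sketch below is a minimal Python illustration under stated assumptions, not a description of how Legacyleap implements it: both versions are assumed to be callable as plain functions, and `legacy_fee`, `modern_fee`, and the 2% fee rule are hypothetical.

```python
from dataclasses import dataclass, field
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP
from typing import Callable, Iterable


@dataclass
class ParityReport:
    """Outcome of running the same cases through two implementations."""
    total: int = 0
    mismatches: list = field(default_factory=list)

    @property
    def passed(self) -> bool:
        # An empty run proves nothing, so it does not count as a pass.
        return self.total > 0 and not self.mismatches


def check_parity(legacy: Callable, modern: Callable, cases: Iterable[tuple]) -> ParityReport:
    """Call both implementations with each case and record every divergent output."""
    report = ParityReport()
    for case in cases:
        report.total += 1
        expected, actual = legacy(*case), modern(*case)
        if expected != actual:
            report.mismatches.append((case, expected, actual))
    return report


CENT = Decimal("0.01")


def legacy_fee(amount: Decimal) -> Decimal:
    """Hypothetical legacy rule: 2% fee, minimum 1.00, rounded half-up to cents."""
    return max(amount * Decimal("0.02"), Decimal("1.00")).quantize(CENT, rounding=ROUND_HALF_UP)


def modern_fee(amount: Decimal) -> Decimal:
    """Hypothetical port that silently switched to banker's rounding (half-even)."""
    return max(amount * Decimal("0.02"), Decimal("1.00")).quantize(CENT, rounding=ROUND_HALF_EVEN)


CASES = [(Decimal("10.00"),), (Decimal("100.00"),), (Decimal("60.25"),)]
report = check_parity(legacy_fee, modern_fee, CASES)
print(report.passed, report.mismatches)
# The 60.25 case diverges: 1.2050 rounds to 1.21 half-up but 1.20 half-even.
```

A rounding-mode change like this compiles, runs, and can pass a casual code review, which is why the production-readiness standard asks for parity evidence rather than only a successful build.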

The $0 Assessment as the Entry Point That Breaks Pilot Purgatory

The $0 Assessment is designed to produce the comprehension artifacts that make everything downstream predictable:

- dependency and module maps,

- risk indicators,

- modernization blueprint,

- architecture observations,

- effort and timeline ranges, and

- recommended migration targets.

These are the structured inputs that a governed production path requires. The assessment initiates that path. It does not conclude with a report.

How Legacyleap Works with Services Partners

The hybrid model the evidence supports is not “platform or services.” It is a platform-governed lifecycle with services operating within the governed system.

The platform governs comprehension, transformation, validation, and deployment-readiness. Services teams, internal or external, bring scale, domain expertise, stakeholder alignment, organizational change management, and regulatory navigation.

Partners amplify the platform. The platform governs the path to production.

Legacyleap does not replace services. It makes services more effective by encoding the lifecycle in a system that persists beyond any single engagement.

Governance and Engineering Control

All transformations are diff-based and require human review before acceptance. AI cannot merge, deploy, or execute code directly. Full-codebase comprehension precedes any transformation.
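The acceptance rule in this paragraph can be modeled as a gate that refuses any change lacking a named human reviewer, combined with the parity requirement from the QA step. The sketch below is hypothetical: `ProposedChange`, `accept`, and the file path are invented for illustration and do not describe Legacyleap's internals or API; only `difflib.unified_diff` is a real (standard-library) call.

```python
import difflib
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProposedChange:
    """A machine-proposed edit, held as a reviewable diff rather than applied directly."""
    path: str
    before: str
    after: str
    reviewer: Optional[str] = None  # set only when a human approves
    parity_passed: bool = False     # set by a separate validation step

    def diff(self) -> str:
        """Unified diff for human review; `before` is kept, so the change stays reversible."""
        return "".join(difflib.unified_diff(
            self.before.splitlines(keepends=True),
            self.after.splitlines(keepends=True),
            fromfile=f"a/{self.path}",
            tofile=f"b/{self.path}",
        ))


def accept(change: ProposedChange) -> str:
    """Gate: without human approval and parity evidence, the change is not accepted."""
    if change.reviewer is None:
        raise PermissionError(f"{change.path}: human review required before acceptance")
    if not change.parity_passed:
        raise ValueError(f"{change.path}: behavior parity not demonstrated")
    # Only returns the approved text; writing, merging, or deploying it is left to people.
    return change.after


change = ProposedChange("billing/fees.py", "rate = 0.02\n", "RATE = Decimal('0.02')\n")
print(change.diff())         # the reviewer inspects the unified diff
change.reviewer = "j.doe"    # hypothetical reviewer approves
change.parity_passed = True  # hypothetical parity run succeeded
approved_text = accept(change)
```

Keeping `accept` free of side effects is a deliberate choice in this sketch: it never writes, merges, or executes anything, in line with the rule that AI cannot merge, deploy, or execute code directly.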

This governance model is architecturally different from copilots and IDE plugins, which operate at the prompt or file level without system-level comprehension, multi-agent orchestration, or parity validation. The distinction is not about capability. It is about what the system is designed to own.

The Delivery Model Is the Decision

The delivery model is the variable that determines whether modernization reaches production. Not the technology, and not the team’s skill level.

Services add capacity. Platforms add continuity. Production requires both, with the platform governing the lifecycle.

- Platform-led governance for comprehension, transformation, validation, and deployment-readiness.

- Services for organizational change, stakeholder alignment, complex architectural judgment, and domain-specific expertise.

Both share accountability for production outcomes, measured by what is deployed, not what is assessed.

For enterprises working through the cost and timeline implications of this decision, our breakdown of modernization cost in 2026 covers ROM estimation, hidden cost categories, and how delivery model choice affects budget predictability.

The first step before choosing a vendor is understanding your system. A structured modernization assessment that produces concrete comprehension artifacts is the lowest-risk way to determine scope, effort, and which delivery model fits your situation.

Request a $0 Modernization Assessment – a no-cost exercise that produces the comprehension artifacts needed to make an informed delivery model decision and initiate a governed production path.

Book a Demo – see how Legacyleap governs the modernization lifecycle in practice: Studio, agents, diff-based review, and validation workflows.

FAQs

The entity that owns the modernization lifecycle should own deployment readiness. When deployment is handed off to a separate team that was not involved in comprehension or transformation, critical context is lost and production timelines slip. The most reliable model assigns lifecycle ownership to a platform or a single accountable team that carries the work from assessment through deployment, with services partners contributing domain expertise within that governed path.

Warning signs include: completed assessments that haven’t progressed to transformation in over six months, multiple pilots on low-risk applications with no production deployment planned, rotating teams that spend weeks rebuilding context, and internal reporting that counts assessments or POCs as modernization progress. If budget is being consumed but no system is closer to production than it was a quarter ago, the project is likely in pilot purgatory.
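Several of these warning signs are measurable and can be checked each quarter. A minimal sketch, assuming a hypothetical per-application status record: the six-month and one-quarter windows come from the answer above, while `AppStatus`, the stage names, and the field names are illustrative. Context rebuilding and progress-reporting habits are left out because they are not easily captured as data.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical ordered stages used to measure movement toward production.
STAGES = ["assessed", "piloted", "transformed", "validated", "deployed"]
SIX_MONTHS = timedelta(days=182)


@dataclass
class AppStatus:
    """Hypothetical quarterly snapshot for one application in the program."""
    name: str
    assessment_completed: Optional[date]
    transformation_started: bool
    pilots_run: int
    production_deployment_planned: bool
    stage_now: str
    stage_last_quarter: str
    spend_this_quarter: float


def warning_signs(app: AppStatus, today: date) -> list[str]:
    """Return the measurable pilot-purgatory warning signs present for one application."""
    signs = []
    if (app.assessment_completed is not None
            and not app.transformation_started
            and today - app.assessment_completed > SIX_MONTHS):
        signs.append("assessment stalled for over six months")
    if app.pilots_run > 1 and not app.production_deployment_planned:
        signs.append("multiple pilots with no production deployment planned")
    moved = STAGES.index(app.stage_now) > STAGES.index(app.stage_last_quarter)
    # A system already in production is not in purgatory, even if its stage is unchanged.
    if app.spend_this_quarter > 0 and not moved and app.stage_now != "deployed":
        signs.append("budget spent with no progress toward production this quarter")
    return signs


app = AppStatus("claims-engine", date(2025, 1, 15), False, 2, False,
                "piloted", "piloted", 250_000.0)
print(warning_signs(app, date(2025, 9, 1)))
```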

Services-led models are most vulnerable to timeline drift because outcomes depend on team continuity, and time-based contracts create limited incentive to accelerate. Platform-led models compress timelines for comprehension and transformation but cannot accelerate organizational change or stakeholder decisions. Hybrid models where the platform governs the lifecycle and services handle judgment-intensive work produce the most predictable timelines because the automated phases stay on track regardless of staffing changes.

Ask whether the platform operates at the system level or the file level. Platforms that require full-codebase ingestion, produce dependency maps, and generate validation artifacts across modules are designed for complex systems. Platforms that work on isolated files or repositories without cross-module context will struggle with tightly coupled logic, undocumented dependencies, and multi-language codebases. The $0 Assessment is designed to answer this question concretely for a specific system.

Ask for case studies that describe deployed systems, not completed assessments or refactored codebases. Request specifics: what deployment artifacts were produced, how behavior parity was validated, and whether the modernized system is currently running in production. Vendors that can only reference assessments delivered or code transformed without evidence of production deployment are demonstrating the exact production gap this article describes.

The transformed code sits in a repository without deployment artifacts, validation evidence, or cutover plans. No one is accountable for bridging the gap between refactored code and a running system. Over time, the transformed codebase drifts from the legacy system still in production, dependencies shift, and the window for safe deployment narrows. Eventually, the organization either restarts the effort or abandons modernization of that system entirely.

References

[1] U.S. Government Accountability Office, “Critical Federal IT Systems Modernization Review,” 2025. https://www.gao.gov/products/gao-25-106741

[2] McKinsey & Company, “Cloud Migration: Avoiding Cost Overruns in Large-Scale Transformations.” https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/clouds-trillion-dollar-prize-is-up-for-grabs

[3] IDC, “Proof of Concept to Production: Why Most POCs Never Scale.” https://www.idc.com/

[4] Forrester Research, “Partner-Led Migration Completion Rates and Delivery Model Impact.” https://www.forrester.com/